
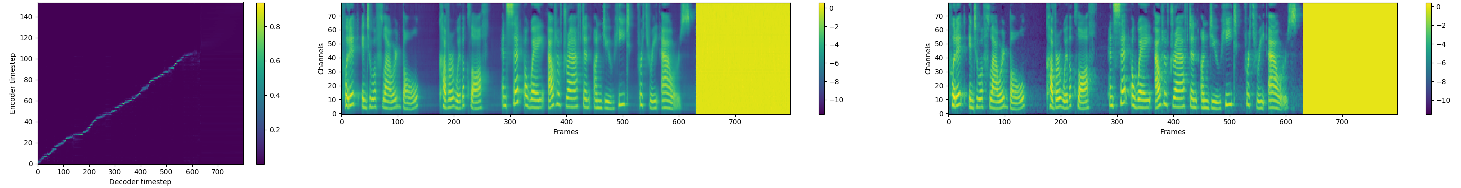

Tacotron 2 (without wavenet)

PyTorch implementation of Tacotron 2, as described in Natural TTS Synthesis by Conditioning WaveNet on Mel Spectrogram Predictions.

This implementation includes distributed and FP16 support and uses the LJ Speech dataset.

Distributed and FP16 support relies on work by Christian Sarofeen and NVIDIA's Apex Library.

Pre-requisites

- NVIDIA GPU + CUDA cuDNN
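One way to confirm this prerequisite is to ask PyTorch what it can see once it is installed. The sketch below is not part of this repo: `check_gpu_environment` is a hypothetical helper name, and treating a non-empty `torch.backends.cudnn.version()` as "cuDNN is usable" is an assumption made for illustration.

```python
def check_gpu_environment():
    """Report whether PyTorch, a CUDA GPU, and cuDNN are visible.

    Hypothetical helper for illustration; not part of this repo.
    """
    try:
        import torch
    except ImportError:
        # PyTorch is not installed yet, so nothing else can be checked.
        return {"torch": False, "cuda": False, "cudnn": False}

    return {
        "torch": True,
        # True only if a CUDA-capable GPU and driver are usable.
        "cuda": bool(torch.cuda.is_available()),
        # version() returns None when cuDNN cannot be loaded (assumption:
        # a non-None version means cuDNN is usable).
        "cudnn": torch.backends.cudnn.version() is not None,
    }


if __name__ == "__main__":
    for name, ok in check_gpu_environment().items():
        print(f"{name}: {'found' if ok else 'missing'}")
```

If any entry prints `missing`, training on GPU is unlikely to work until the driver, CUDA, or cuDNN installation is fixed.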

Setup

- Download and extract the LJ Speech dataset

- Clone this repo: `git clone https://github.com/NVIDIA/tacotron2.git`

- CD into this repo: `cd tacotron2`

- Update .wav paths: `sed -i -- 's,DUMMY,ljs_dataset_folder/wavs,g' filelists/*.txt`

- Install PyTorch 0.4

- Install Python requirements or use the Docker container (tbd)

  - Install Python requirements: `pip install -r requirements.txt`

  - OR

  - Docker container: (tbd)
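The `sed` step above replaces the `DUMMY` placeholder in the filelists with the folder that holds the extracted .wav files. Where `sed -i` is unavailable or behaves differently, the same substitution can be sketched in Python. This is only an illustration: `update_wav_paths` is a hypothetical helper, not a script shipped in this repo, and it assumes the filelists are UTF-8 text.

```python
from pathlib import Path


def update_wav_paths(filelist_dir, wav_dir, placeholder="DUMMY"):
    """Replace the placeholder in every .txt filelist with wav_dir.

    Returns the number of files that were changed.
    Hypothetical helper for illustration; not part of this repo.
    """
    changed = 0
    for path in sorted(Path(filelist_dir).glob("*.txt")):
        text = path.read_text(encoding="utf-8")
        updated = text.replace(placeholder, wav_dir)
        if updated != text:
            path.write_text(updated, encoding="utf-8")
            changed += 1
    return changed


if __name__ == "__main__":
    # Same substitution as the sed command, run from the repo root.
    n = update_wav_paths("filelists", "ljs_dataset_folder/wavs")
    print(f"updated {n} filelist(s)")
```

Running it a second time changes nothing, because the placeholder is no longer present in the files.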

Training

- `python train.py --output_directory=outdir --log_directory=logdir`

- (OPTIONAL) `tensorboard --logdir=outdir/logdir`

Multi-GPU (distributed) and FP16 Training

- `python -m multiproc train.py --output_directory=/outdir --log_directory=/logdir --hparams=distributed_run=True,fp16_run=True`
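The `--hparams` flag carries hyperparameter overrides as `name=value` pairs. As an illustration only, the sketch below parses such a string into a dictionary, assuming the pairs are comma-separated and that `True`/`False` and numeric values should be converted from text. `parse_hparam_overrides` is a hypothetical name; the repo's own handling lives in hparams.py and may behave differently.

```python
def _convert(raw):
    """Turn a raw string into bool, int, or float when it looks like one."""
    if raw in ("True", "False"):
        return raw == "True"
    for cast in (int, float):
        try:
            return cast(raw)
        except ValueError:
            pass
    return raw  # leave anything else as a plain string


def parse_hparam_overrides(spec):
    """Parse 'a=1,b=True' into {'a': 1, 'b': True}.

    Hypothetical helper for illustration; not the repo's parser.
    """
    overrides = {}
    if not spec:
        return overrides
    for pair in spec.split(","):
        name, sep, raw = pair.partition("=")
        if not sep or not name.strip():
            raise ValueError(f"expected name=value, got {pair!r}")
        overrides[name.strip()] = _convert(raw.strip())
    return overrides
```

With this sketch, `parse_hparam_overrides("distributed_run=True,fp16_run=True")` returns `{'distributed_run': True, 'fp16_run': True}`.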

Inference

- `jupyter notebook --ip=127.0.0.1 --port=31337`

- Load inference.ipynb

Related repos

nv-wavenet: Faster than real-time wavenet inference

Acknowledgements

This implementation uses code from repos by Keith Ito and Prem Seetharaman, as described in our code.

We are inspired by Ryuichi Yamamoto's Tacotron PyTorch implementation.

We are thankful to the Tacotron 2 paper authors, especially Jonathan Shen, Yuxuan Wang and Zongheng Yang.